Human Action Recognition by Representing 3D Human Skeletons as Points in a Lie Group

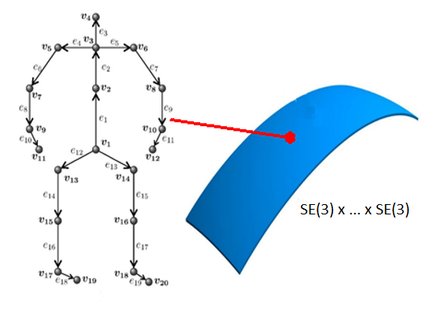

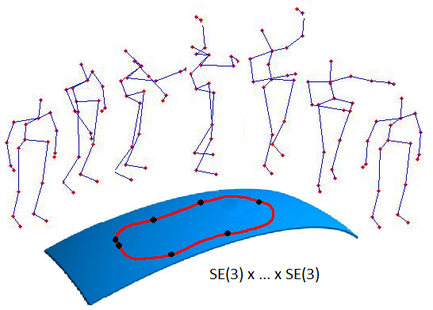

Abstract: Recently introduced cost-effective depth sensors, coupled with the real-time skeleton estimation algorithm of Shotton et al., have resulted in a renewed interest in skeleton-based human action recognition. Most of the existing skeleton-based approaches use either the joint locations or the joint angles to represent a human skeleton. In this paper, we propose a new skeletal representation that explicitly models the 3D geometric relationships between various body parts using translations and rotations in 3D space. Since 3D rigid body motions are members of the special Euclidean group SE(3), the proposed skeletal representation lies in the Lie group SE(3) × ... × SE(3), which is a curved manifold. With the proposed representation, human actions can be modeled as curves in this Lie group. Since classification of curves in this Lie group is not an easy task, we map the curves from the Lie group to its Lie algebra, which is a vector space. We then perform classification using a combination of dynamic time warping, Fourier temporal pyramid representation and linear SVM. Experimental results on three action datasets show that the proposed representation performs better than various other commonly used skeletal representations. The proposed approach also outperforms various state-of-the-art skeleton-based human action recognition approaches.
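The mapping from the Lie group to its Lie algebra can be illustrated with a small sketch. The Python below is not the released Matlab code; it is a minimal illustration, assuming each relative body-part transformation is given as a 3×3 rotation matrix `R` and a translation `t`. It uses the standard closed-form logarithm of SE(3) to turn each pair into a 6-vector (rotation part followed by translation part). The function names `cross`, `se3_log` and `skeleton_to_vector` are hypothetical, and rotations by angles close to π are not handled.

```python
import math

def cross(a, b):
    """Cross product of two 3-vectors."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def se3_log(R, t):
    """Map a rigid transform (R, t) in SE(3) to a 6-vector [w, v] in se(3).

    Assumes R is a valid rotation matrix with angle well below pi.
    """
    # vee(R - R^T) equals 2*sin(theta) times the unit rotation axis.
    a = [R[2][1] - R[1][2], R[0][2] - R[2][0], R[1][0] - R[0][1]]
    sin_theta = 0.5 * math.sqrt(sum(x * x for x in a))
    cos_theta = 0.5 * (R[0][0] + R[1][1] + R[2][2] - 1.0)
    theta = math.atan2(sin_theta, cos_theta)
    if theta < 1e-3:
        # Taylor expansions avoid dividing by a vanishing sin(theta).
        scale = 0.5 + theta ** 2 / 12.0
        c = 1.0 / 12.0 + theta ** 2 / 720.0
    else:
        scale = theta / (2.0 * sin_theta)
        half = 0.5 * theta
        c = (1.0 - half / math.tan(half)) / theta ** 2
    w = [scale * x for x in a]  # axis-angle vector, |w| = theta
    # v = V^{-1} t, with V^{-1} = I - 0.5*[w]x + c*[w]x^2
    wxt = cross(w, t)
    wxwxt = cross(w, wxt)
    v = [t[i] - 0.5 * wxt[i] + c * wxwxt[i] for i in range(3)]
    return w + v

def skeleton_to_vector(transforms):
    """Concatenate the se(3) 6-vectors of all (R, t) pairs of one skeleton."""
    out = []
    for R, t in transforms:
        out.extend(se3_log(R, t))
    return out
```

Applying `skeleton_to_vector` to every frame of a sequence gives a curve in a vector space, which is the kind of input the later classification steps operate on.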

Contributions

- We represent 3D human skeletons as points in the Lie group SE(3) × ... × SE(3). The proposed skeletal representation explicitly models the 3D geometric relationships between various body parts using rigid body transformations.

- We show that the proposed representation performs better than many existing skeletal representations by evaluating it on three action datasets: MSR-Action3D dataset, UTKinect-Action dataset and Florence3D-Action dataset.

- We show that the proposed skeletal representation combined with dynamic time warping (DTW), Fourier temporal pyramid (FTP) representation and linear SVM outperforms various state-of-the-art skeleton-based human action recognition approaches.
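As an illustration of the temporal alignment step, here is a minimal sketch of textbook dynamic time warping between two sequences of feature vectors. It is not the released Matlab code and it does not reproduce how the paper uses DTW inside its full pipeline. It only shows the basic pairwise alignment, assuming Euclidean distance between frames. The function name `dtw` is hypothetical.

```python
import math

def dtw(seq_a, seq_b):
    """Return (total alignment cost, warping path) for two vector sequences.

    The path is a list of (index_in_a, index_in_b) pairs.
    """
    n, m = len(seq_a), len(seq_b)
    inf = float("inf")
    # cost[i][j]: best cost of aligning the first i frames of a with the first j of b.
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = math.dist(seq_a[i - 1], seq_b[j - 1])  # Euclidean frame distance
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    # Backtrack from the end to recover the alignment.
    path = []
    i, j = n, m
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        steps = [(cost[i - 1][j - 1], i - 1, j - 1),
                 (cost[i - 1][j], i - 1, j),
                 (cost[i][j - 1], i, j - 1)]
        _, i, j = min(steps)
    path.reverse()
    return cost[n][m], path

# The same motion performed at two speeds aligns with zero cost.
slow = [[0.0], [1.0], [1.0], [2.0]]
fast = [[0.0], [1.0], [2.0]]
total, path = dtw(fast, slow)
```

The warping path can then be used to resample one sequence onto the time axis of the other before computing any fixed-length temporal features.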

Experiments

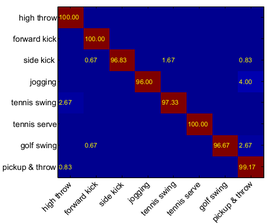

MSR-Action3D dataset

- 3 subsets each consisting of 8 different actions performed by 10 subjects (557 action sequences in total).

- Cross-subject testing: 5 subjects for training and 5 subjects for testing.
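The cross-subject protocol can be sketched as follows. This is a minimal illustration with toy data, assuming each sequence carries a subject identifier. The choice of which five subjects go into the training set (odd-numbered here) is an assumption made for the example, and the field names and `cross_subject_split` are hypothetical.

```python
def cross_subject_split(sequences, train_subjects):
    """Split sequences so that no subject appears in both training and test sets."""
    train_subjects = set(train_subjects)
    train = [s for s in sequences if s["subject"] in train_subjects]
    test = [s for s in sequences if s["subject"] not in train_subjects]
    return train, test

# Toy data: 10 subjects, 2 placeholder sequences each.
sequences = [{"subject": s, "action": a}
             for s in range(1, 11) for a in ("wave", "clap")]
# Assumed assignment: odd-numbered subjects train, even-numbered subjects test.
train, test = cross_subject_split(sequences, train_subjects=[1, 3, 5, 7, 9])
```

Splitting by subject rather than by sequence means the classifier is always evaluated on people it has never seen.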


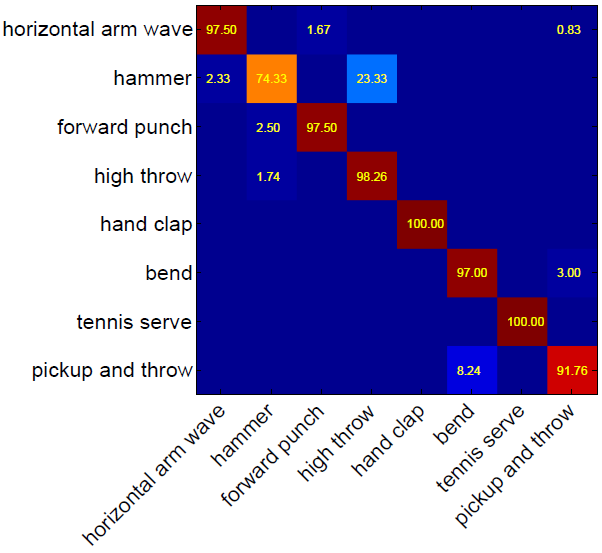

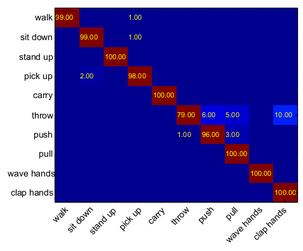

UTKinect-Action dataset

- 199 action sequences, 10 actions, 10 subjects.

- Cross-subject testing: 5 subjects for training and 5 subjects for testing.

Confusion matrix

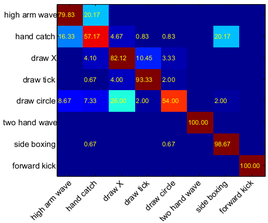

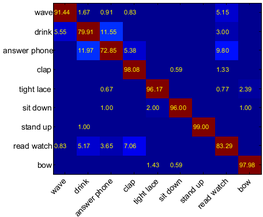

Florence3D-Action dataset

- 215 action sequences, 9 actions, 10 subjects.

- Cross-subject testing: 5 subjects for training and 5 subjects for testing.

Confusion matrix

Matlab code used for the experiments

Use the link below to download the skeletal feature extraction and action recognition code.

Please cite the paper below if you use this code for your research.

Publications

Raviteja Vemulapalli, Felipe Arrate, and Rama Chellappa, "Human Action Recognition by Representing 3D Human Skeletons as Points in a Lie Group", CVPR, 2014.

[PDF][Presentation-PPT][Presentation-PDF] [Poster] (ORAL)
